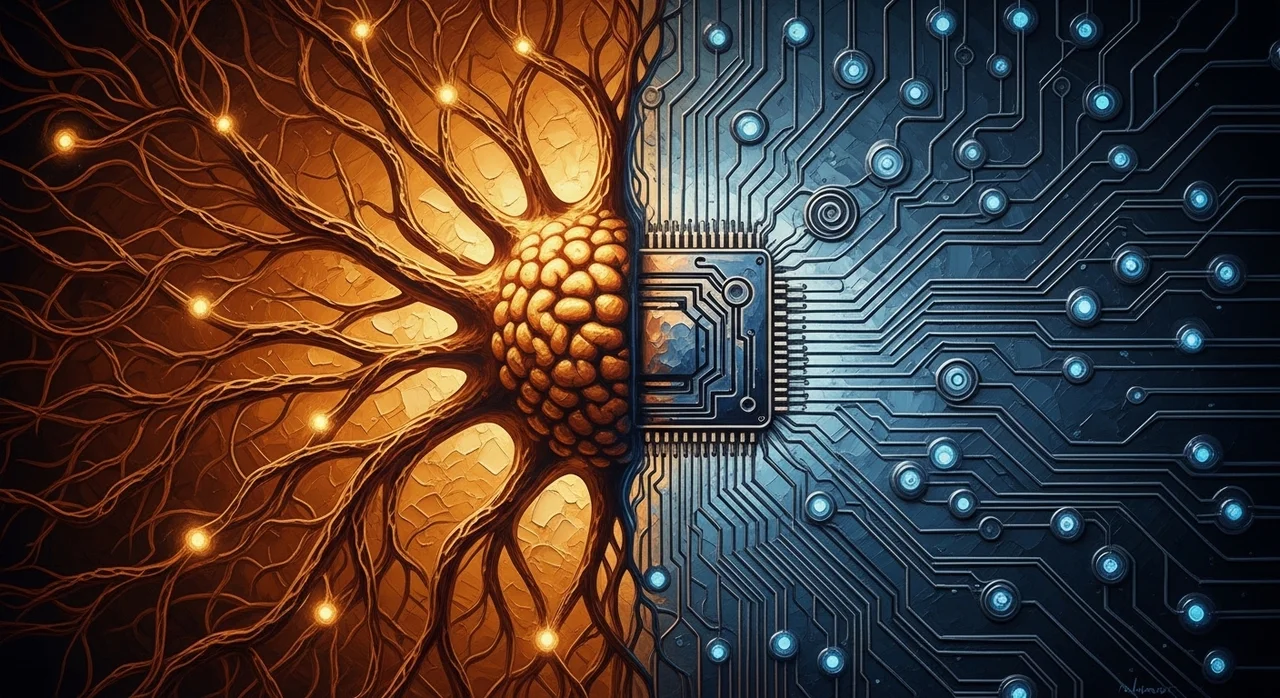

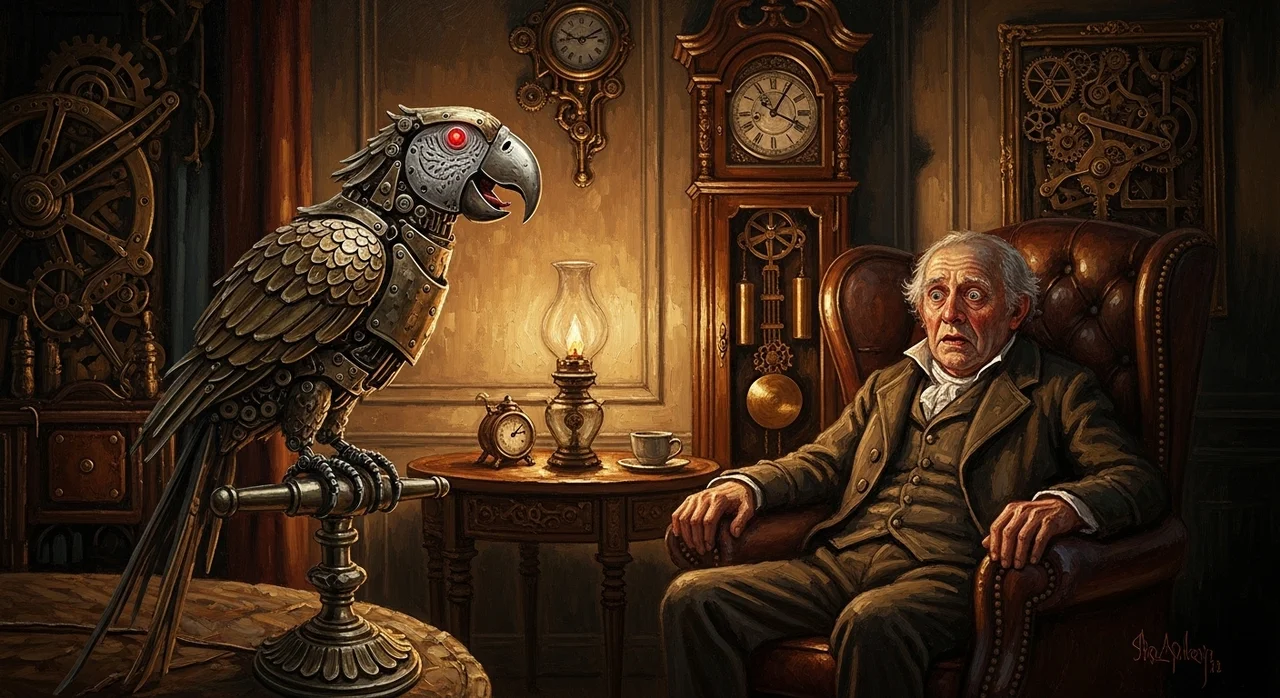

Consciousness Across Substrates: From Animals to Plants to Machines

If consciousness can arise in bird brains, octopus arms, and possibly insect central complexes, what principled reason exists to exclude artificial systems? The evidence is more complex than it first appears.